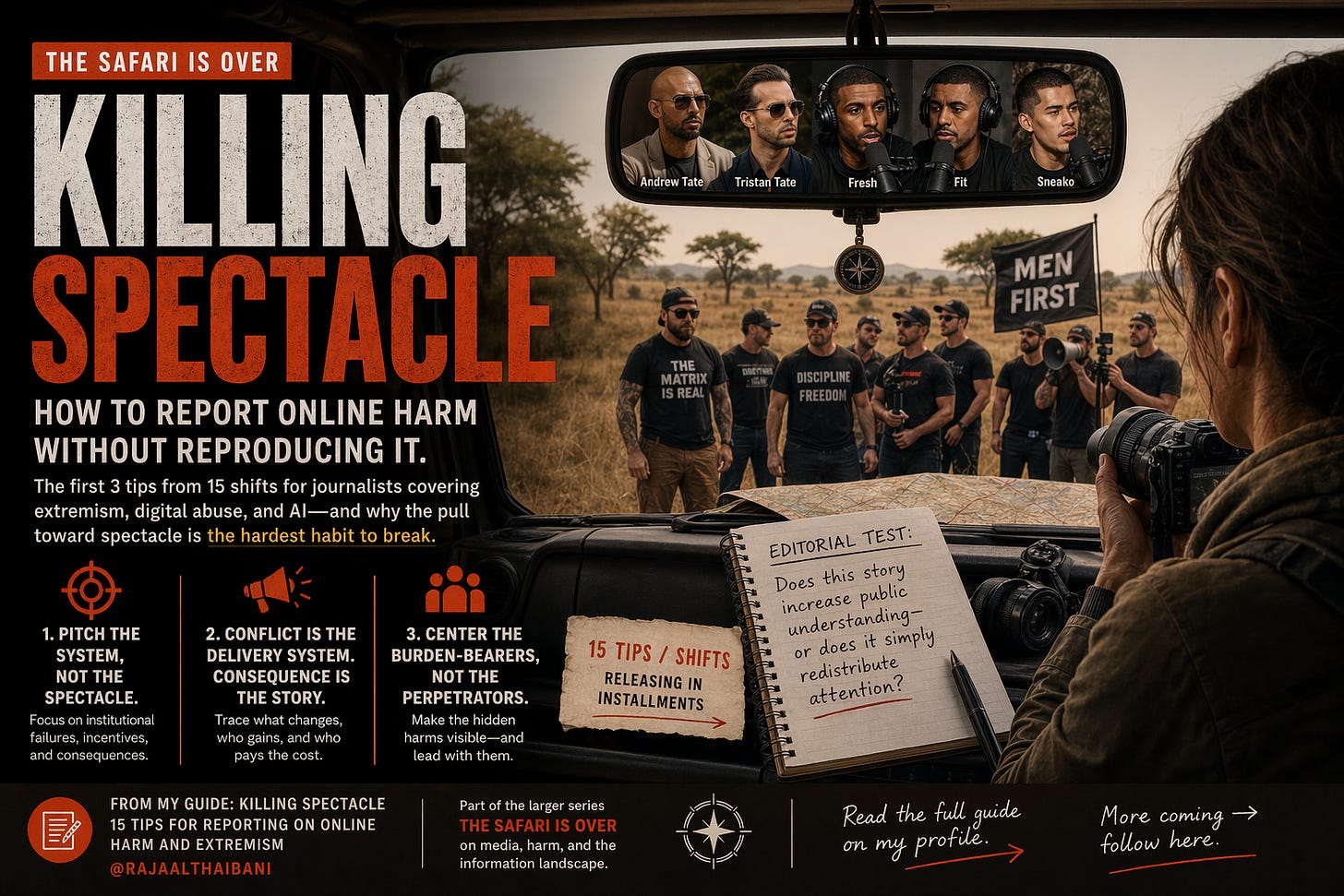

Killing Spectacle (Part 1): Three shifts for journalists reporting on online harm and extremism

[Shifts 1–3 of 15] Frame the story right: pitch the system, report the consequence, center the burden-bearers.

From Killing Spectacle: 15 Shifts for Reporting on Online Harm and Extremism, a guide for journalists, editors, producers, researchers, and media practitioners. Published here in parts. Each installment stands alone. The full picture builds across the series.

Killing Spectacle is part of ‘The Safari Is Over’, an ongoing series on media, harm, and the information landscape.

There’s a moment most journalists on this beat recognize, even if they don’t name it.

You’re pitching a story about a harmful online community, an AI abuse case, a radicalization pipeline. And somewhere in the process, the story migrates toward its most watchable part. The clip. The personality. The confrontation. The thing that makes an editor say: that.

It feels like journalism. It has texture and tension and a hook. But something has gone missing: the part that would actually change how the public understands what’s happening. The part that helps them identify where to direct action.

This guide is about that gap, and how to close it, deliberately.

What this guide is and isn’t

These are practices, not rules, drawn from years in this space, watching what holds and what collapses under scrutiny. They’re also shifts: things you have to actively unlearn before you can replace them with something more useful. Most of the bad instincts on this beat aren’t careless. They’re trained. They got rewarded. They got pieces commissioned and clips shared and panels booked. Unlearning them requires naming them first.

This is also a living document and a conversation. If you work on these beats (as a journalist, researcher, advocate, lawyer, or technologist), what’s missing and what works belongs in here. Leave a comment or reach out directly. Everything useful goes into the guide, with credit.

How the full guide is structured

The 15 shifts are arranged across four phases: Framing (how you conceptualize and pitch the story before reporting begins), Rigor (how you verify and source in environments designed to mislead), Structure (how you shape and distribute the story without reproducing the harm), and Sustained Practice (how you protect and maintain this work inside institutions that don’t always reward it). The three shifts below are Phase 1. You don’t need to follow them sequentially, but the logic is cumulative, and each phase assumes the one before it.

The question that runs through all 15 shifts

Does this story increase public understanding, or does it redistribute attention?

Redistributing attention is easy. It generates clicks, and it can feel like exposure. But it rarely changes anything. Public understanding is harder to produce and harder to sell. It’s also the only thing journalism in this space can offer that the algorithm can’t.

What prompted this guide

This guide didn’t come from one story. It came from a pattern.

There is a structural problem in how online harm, extremism, and algorithmic culture get covered, and it shows up across formats, platforms, and beats. It’s not about bad journalists and storytellers. It’s about the gravitational pull of certain types of stories: ones that arrive pre-loaded with conflict, personality, and outrage, and make spectacle feel like investigation.

You know these stories.

The influencer radicalizing teenage boys, covered through his most inflammatory clips. The deepfake abuse case that leads with the viral moment and buries the policy vacuum. The AI system causing harm, reported as a curiosity rather than a systemic failure. The harassment campaign reconstructed around the abuser’s language rather than its cost to the target. The extremist community profiled through its most watchable members, never its recruitment infrastructure.

The Jihadi Johns and Andrew Tates: manufactured characters who understood exactly what the media would do with them, and who accordingly built the spectacle, legitimacy, and reach they so desperately wanted. The alt-right figures who gamed outrage cycles to launder fringe ideology into mainstream debate. The misogynist podcasters whose revenue model runs on the very coverage claiming to expose them. The election disruptors who flood the zone knowing the correction will carry the lie further. The war crimes filmed and uploaded by perpetrators, state and non-state actors alike, because they knew journalists would distribute them.

In every case, the harmful actor understood the media environment better than the coverage did, and often better than the media understands itself.

These stories all have legitimate public interest at their core. The coverage often doesn’t find it because the spectacle is closer, faster, and easier to sell. That’s not a personal failure. It’s an editorial one.

And editorial choices can be made differently. This is a guide to making them differently: 15 shifts from spectacle-driven coverage to high-impact public interest reporting across online harm, extremism, AI, and algorithmic culture.

Shift 1: Pitch the system, not the spectacle

Unlearn: Anchor the pitch to a personality or incident.

Relearn: Pitch the system the incident reveals.

The most damaging instinct on this beat is reaching for a compelling figure or moment to sell a story. This is understandable; it’s how pitches get bought. But on beats involving harassment, extremism, or AI harm, incident-led coverage carries real costs: it re-traumatizes victims, amplifies the people causing harm, and normalizes what should remain structurally shocking. More practically: it produces stories that don’t survive the next news cycle. Incident-led coverage has a shelf life. System-led coverage has a subject.

What this looks like in practice

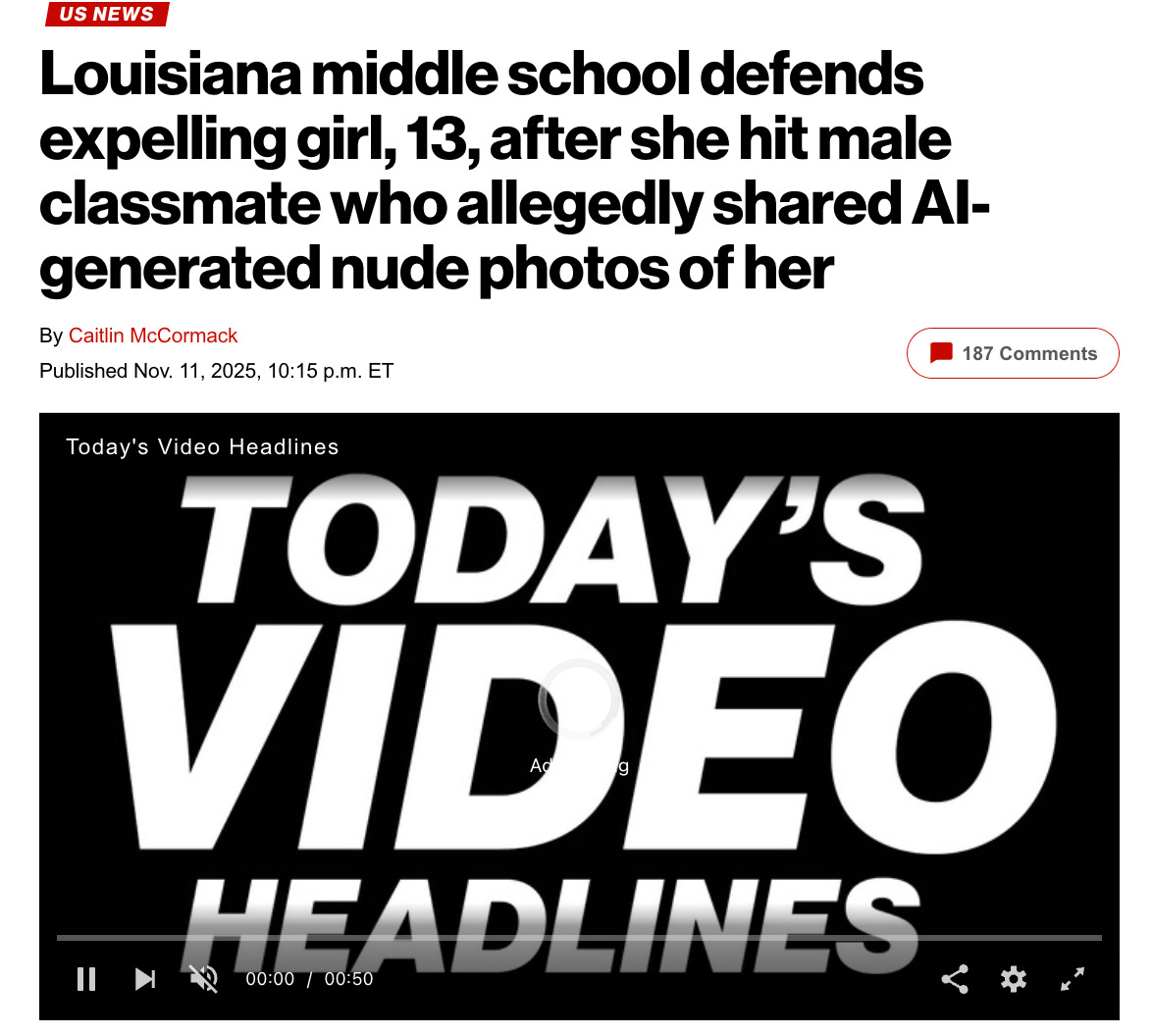

When AI-generated non-consensual images of a female student at a Louisiana middle school circulated last year, early coverage treated it as a conflict: a fight, an expulsion, a drama between teenagers. Readable. Thin.

Stronger reporting reframed the same facts around a policy vacuum: schools were applying outdated cyberbullying frameworks to a new type of harm, built on deepfake technology those frameworks never contemplated. No mechanism existed to respond, discipline, or protect. The incident was the entry point. The institution was the story.

One version ages out when the next incident lands. The other stays useful to every educator, parent, and policymaker who encounters the same gap. Because the reporting focused on the public-interest frame rather than the spectacle, eight more students were identified as victims of the same abuse, revealing a critical lack of oversight and accountability.

Editorial test:

Can you name the failing institution, platform, or policy in your first two paragraphs? If the story falls apart without the viral moment, you’re chasing spectacle.

Pitch frame to keep nearby:

The public needs to understand how [system] is responding to [harm] because it affects their ability to [protect themselves / hold someone accountable / make an informed decision].

Field note on AI and algorithmic beats specifically:

When a model or platform is implicated in harm, resist the pull toward anthropomorphizing the technology as the protagonist. The model isn’t the story. The decision to deploy it without safeguards, the gap between the terms of service and what the product actually does, the accountability structure that allowed the harm to scale: those are the story. Name the company. Name the design choice. Name the person who signed off.

Toolkit 1: Pitching the system when editors want the story

Before pitching, build a one-page map of the system you're investigating: the institution, the policy gap, the harm pathway, the people absorbing the cost. Kumu or a shared Miro board forces you to articulate the system before a word is written, and gives editors something concrete to look at beyond a clip or outrageous hook. AI-assisted document analysis (DocumentCloud Analyst, OCCRP Aleph) surfaces patterns across large document sets faster than manual reading. And maintain a running accountability file on the institutions on your beat (policy changes, enforcement actions, known gaps) so when an incident occurs, the system story is already half-reported.

*See my full guide and series for the deeper breakdown of this case and related tools.

Shift 2: Conflict is the delivery system. Consequence is the story.

Unlearn: Let the most dramatic moment become the unit of analysis.

Relearn: Treat conflict as the delivery system. Report the consequence.

Conflict generates heat, and heat generates clicks. But heat dissipates, and if you’ve only reported the confrontation, nothing survives the news cycle.

Covering consequence is different. Consequence is what changed. Who gained power, money, reach, or legitimacy. What the people most affected did next. How institutions responded or didn’t, or built in deniability so they wouldn’t have to.

What this looks like in practice

Podcast formats built around confrontation and humiliation, Fresh & Fit being a well-documented case, tend to get covered through their worst and most outrageous clips. That coverage is reliable and easy to produce. It also explains nothing.

It doesn’t tell you how the format generates revenue, how engagement mechanics reward escalation, how humiliation becomes entertainment, or what that does to the young men watching. Consequence-led reporting asks: what changed as a result of this? Who built a platform, a business, a political foothold? Who lost their safety, their sense of what’s normal? Who absorbed the cost?

That’s where the story lives.

Editorial test:

What happened after the conflict? Who gained, measurably (in reach, revenue, or legitimacy) and who absorbed the damage?

If you can’t answer both, the reporting isn’t finished.

Field note

Consequence is harder to report because it's often disaggregated and delayed. The influencer's revenue doesn't appear in the clip. The behavioral shift in the audience doesn't show up in the incident report. This is partly by design: platforms and bad actors alike benefit from keeping consequence invisible. Closing the gap requires a different sourcing strategy: researchers, platform transparency data, former employees, and the people most affected tracked over time, not quoted once. Phase 4 of this guide addresses the longitudinal obligation in full.

Toolkit 2: Following consequence when platforms obscure it

For platform and influencer revenue: Social Blade tracks estimated YouTube and Twitch earnings; SimilarWeb tracks audience growth and traffic sources; OpenCorporates surfaces corporate structures. For political influence: InfluenceMap. For moving from "here's the personality" to "here's the infrastructure": Gephi visualizes how content, accounts, and communities connect. For high-volume content beats, AI transcription (Whisper) and synthesis tools (NotebookLM) let you track rhetoric and escalation patterns across thousands of hours of material: the slow radicalization pipeline, not just the viral moment. And set calendar reminders at 3, 6, and 12 months after publication. The follow-up is itself a reporting method.
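If you would rather generate the 3-, 6-, and 12-month follow-up dates than count them out by hand, a few lines of Python will do it. This is a minimal sketch using only the standard library; the function names `add_months` and `follow_up_dates` and the sample publication date are illustrative assumptions, not part of any tool named above.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Return the date `months` after `start`, clamped to the last day of a shorter month."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def follow_up_dates(published: date, offsets=(3, 6, 12)) -> list[date]:
    """Follow-up check-in dates at the given month offsets after publication."""
    return [add_months(published, m) for m in offsets]


# Hypothetical publication date, for illustration only.
for due in follow_up_dates(date(2024, 11, 30)):
    print(due.isoformat())
```

Paste the resulting dates into whatever calendar you already use. The clamping step only matters when a piece publishes late in a month and the target month is shorter.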

*See my full guide and series for the deeper breakdown of this case and related tools.

Shift 3: Center the burden-bearers, not the perpetrators

Unlearn: Let perpetrators set the terms of the story.

Relearn: Center the people who carry the long-term burden.

This is the structural failure that runs deepest, and the one most resistant to change because it’s baked into how stories get told.

On extremism, harassment, and abuse beats, perpetrators routinely set the frame. They’re quotable, accessible, often actively seeking coverage and eager for attention. Victims and survivors appear later: as context, as reaction, as proof that something bad happened. That structure isn’t neutral. It reproduces the hierarchy of the harm itself.

Centering burden-bearers isn’t a fairness question. It’s a reporting accuracy question. The people who live with the long-tail consequences of these systems understand them differently, and often more completely, than the people producing them. Their testimony is evidence. Not color.

What this looks like in practice

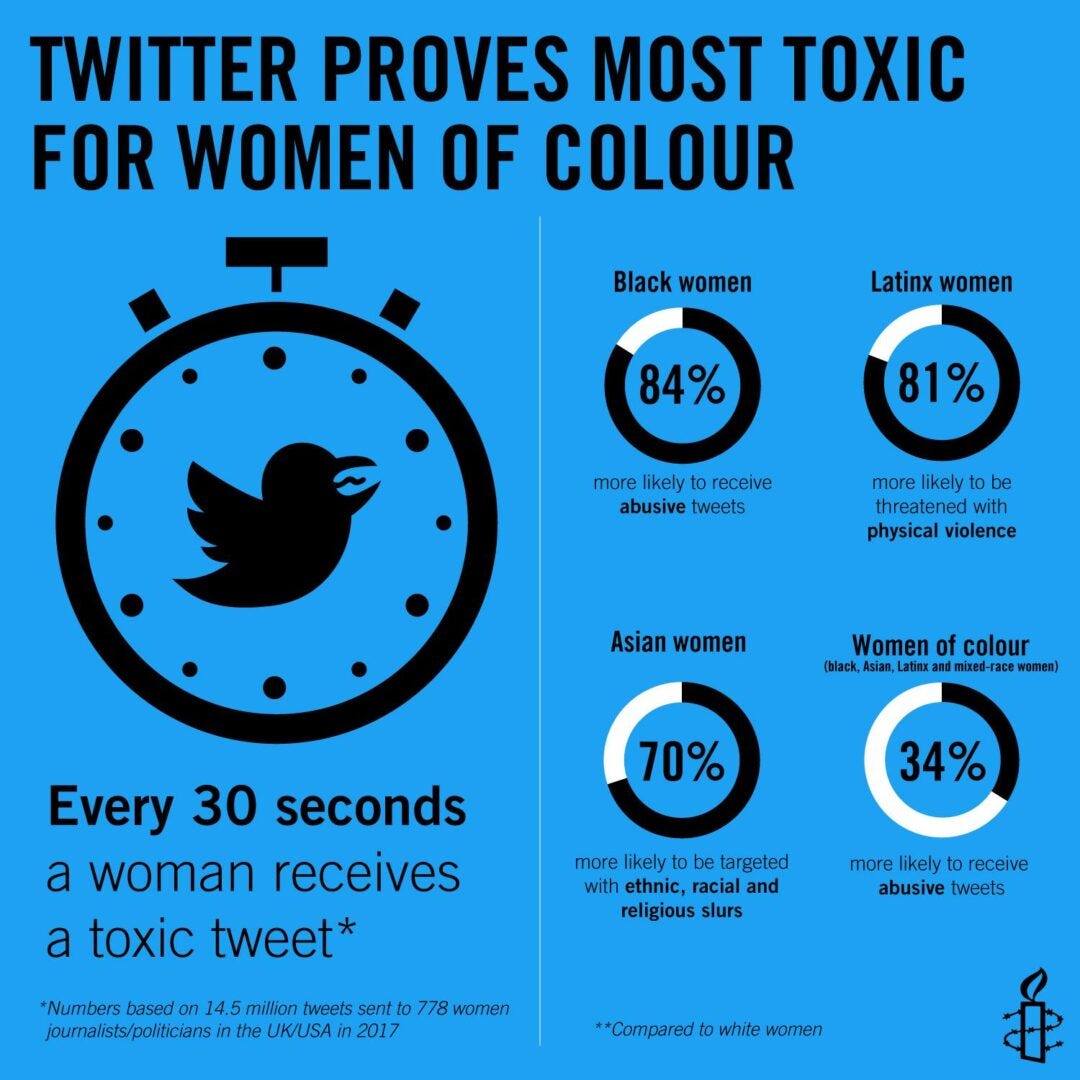

Amnesty International's Toxic Twitter research didn’t focus on what abusers said; it focused on what the abuse cost. Women changed how they used platforms. Many withdrew from public life online. Some feared for their physical safety offline. The damage was measurable, documented, and largely absent from coverage that kept returning to perpetrators and their rhetoric. That research made harm legible in terms institutions could act on. It named the hidden tax. It showed the invisible majority.

Editorial test:

Who has the most to lose in this story? Are they present with name, context, and consequence in the first third of your piece?

If not, you’re reporting the fire. Not the building that keeps catching.

Field note on source relationships

Burden-bearers are often sources who've been burned by coverage before: used as a single quote, stripped of context, left exposed after publication with no follow-up. The relationship requires more than a consent form. It requires transparency about how the material will be used, what decisions you'll make about their identification, and what your obligations are after the piece goes live. Phase 2 of this guide addresses this in depth: specifically, how to handle sources who are also subjects, and what rigor looks like without cruelty.

Toolkit 3: Reaching burden-bearers and protecting them

Secure communications are baseline: SecureDrop for newsrooms; Signal with disappearing messages for individual reporters. The Freedom of the Press Foundation's operational security guides are shareable with sources, not just practitioners. For community-based sourcing, the antidote to the parachute model, build relationships with survivor advocacy groups, mutual aid networks, and community organizations before you need them for a story. Meedan's Check enables collaborative verification with input from affected communities, valuable for content in languages or contexts outside a reporter's fluency. And the Dart Center for Journalism and Trauma carries peer-reviewed, practitioner-tested guidance on trauma-informed practice. It belongs in your working reference, not just your reading list.

*See my full guide and series for the deeper breakdown of this case and related tools.

What these three shifts have in common

They all require resisting the pull toward what is easiest to see, film, quote, and sell.

That pull is real. Editors want the hook. Platforms reward the clip. Audiences click on conflict. None of that is going away. And none of it is an excuse, either.

These shifts aren’t about producing slower journalism or quieter journalism. They’re about producing journalism with a longer half-life: stories that build public understanding instead of borrowing its attention.

Phase 1 of this guide is conceptual. It changes how you frame the story before you report it. The shifts in Phases 2, 3, and 4 build on this foundation: rigor without cruelty, structure that makes systems visible rather than personalities legible, and the sustained practice of doing this work inside institutions that don’t always reward it. A story with the right frame but weak verification is still a weak story. These phases are cumulative. Each one assumes the work of the one before.

Because I care, and because I want us to win, here are two bonus shifts to carry with you from Part 1 before you start reporting: treating virality as distribution evidence, and placing the incident within the larger pattern. Both are about closing the gap before the first word is written.

Killing Spectacle (Part I-Continued): Framing the Story — Two Bonus Shifts Before You Report on Online Harm and Extremism

The Field

The practices above are easier to sustain inside a community of people working on the same problems than alone. That’s what The Field is: a cross-sector community of practice for journalists, researchers, advocates, lawyers, and technologists working at this intersection, built on the principle that the people with the most situational knowledge are often those with the least institutional power, and that better practice requires closing that gap deliberately. If this is your beat, wherever you are, it’s yours to join.

Join The Field: Fill out the intake form or reach out to me directly at inquiries@rajaalthaibani.com

The Frontier

These three shifts will make individual stories better. They won’t fix the structural conditions that make better journalism hard to sustain: the revenue models that reward speed over depth, the synthetic media environment that is actively contaminating the evidentiary record, and the reach gap that means the reporting most needed by communities closest to the harm is the reporting least likely to reach them. Each of those problems gets its full treatment in the phase where it becomes most acute: synthetic media and verification in Part 2, the reach gap in Part 3, the revenue question in Part 4. Naming them here is a promise, not a deferral. The frontier is the reason the guide exists. Craft alone doesn’t close it.

Up next: Killing Spectacle, Part 2: Rigor without cruelty.

How to verify claims in spaces designed to mislead, including a synthetic media environment where the detection tools are always one cycle behind the fakes. How to handle sources who are also subjects, and what informed consent actually looks like as an ongoing practice rather than a one-time form. And the specific problem no one talks about clearly enough: what you do with evidence you can verify but cannot safely publish, and how that shapes what you can responsibly claim.

Part 2 of this guide is where the frame you built in Part 1 either holds or falls apart. The craft is in making it hold.

If this is your beat, or the beat of someone you edit, teach, or commission, send it to them directly and invite them to join The Field, our new community of practice. The journalists and media practitioners who need this most are rarely the ones looking for it.

These aren’t universal rules. They’re practices drawn from years in this space. What works in a long-form investigation won’t always survive a breaking news brief. Context matters. This guide is a conversation: leave a comment or reach me directly with what’s missing and what works. What’s useful goes into the guide, with credit.

About the author. Raja Althaibani works at the intersection of media, harm, technology, and accountability: advising, training, investigating, and building tools for practitioners navigating an information landscape that moves faster than most frameworks can follow.

She writes Unembedded, which includes the ongoing series The Safari Is Over, and founded The Field, a cross-sector community of practice for journalists, researchers, advocates, and technical practitioners working on these beats.

inquiries@rajaalthaibani.com · www.rajaalthaibani.com · Join The Field

Thank you for this thoughtful and resonating piece. The shift from spectacle to system is especially clear and useful.

I’ve been working through a related lens, looking at power as a structured system where political institutions, media, academia, and legal systems are shaped through capture by interdependent blocs such as Israel, the defence industry, financial actors, tech platforms, and energy interests, which reinforce each other through shared incentives and coordinated outcomes. In that sense, media is not outside the system reporting on it, but one of the nodes through which that alignment is reproduced, through ownership concentration, editorial control, and the management of visibility across editorial and platform environments, alongside the enforcement of boundaries through career risk and loss of access.

Reading your piece, I kept coming back to where the newsroom sits within that structure. A lot of what you describe seems to sit at the intersection of editorial practice and institutional incentive, where funding, access, ownership, and career pathways reward certain instincts and discourage others. That suggests the pull towards spectacle is not just something to unlearn, but something produced and reinforced by the same structures that define what can be reported in the first place. I’m curious how you think about applying these shifts when that constraint is present.

I also found myself thinking about how this approach translates in contexts structured as coloniser and colonised or oppressor and oppressed, particularly in Palestine. In those cases, the system is an alignment with Israel’s interests, including military supply, diplomatic protection, legal shielding, and media framing that sustain and normalise its demographic engineering and continued expansion. Even system-level reporting often begins from terms like “conflict”, which flattens power, “self defence”, which legitimises state violence, or “terrorism”, which criminalises resistance, all of which shape what the system appears to be before reporting begins.

I recently tried to work through something similar in a piece on Palestine, by taking questions like “do you condemn Hamas?” or “does Israel have a right to defend itself?” and stripping them back to the assumptions they impose. It made me wonder whether system-led reporting also has to break the frame those questions sit within first, otherwise the system itself is only ever partially visible.