Killing Spectacle (Part 1, Continued): Framing the Story, with Two Bonus Shifts Before You Report on Online Harm, Extremism, and AI Abuse

Why virality lies, why single incidents distort, and how to report scale before the platform assigns the story for you

Part 1 covered the first three shifts media practitioners should make before reporting: pitch the system, report the consequence, center the burden-bearers. Here are two more bonus shifts to carry with you. Because I care, and because I want us to win.

From Killing Spectacle: 15 Shifts for Reporting on Online Harm and Extremism, a guide for journalists, editors, producers, researchers, and media practitioners. Published here in parts. Each installment stands alone. The full picture builds across the series.

Start here: Part 1: Frame the Story Right.

Here are two more shifts to make in our daily practice to help us kill the ‘spectacle’ in ‘spectacle journalism’.

My first piece published here covered the first three mindset and technical shifts to make before reporting: pitch the system not the spectacle, report consequence not conflict, center the burden-bearers not the perpetrators. Those shifts are about what story you’re telling and whose experience is at the center of it.

These two are about something that happens before any of that: reading the information environment you’re working inside before you let it assign the story for you.

On beats covering online harm, extremism, and AI abuse, the platform is not a neutral surface. It is an active editorial force. It decides what looks significant. It manufactures the appearance of consensus. It makes fringe content look mainstream and widespread harm look marginal. Journalists who don’t account for that are not reporting reality. They are reporting the platform’s version of reality, which is designed, in specific and documented ways, to serve interests other than public understanding.

These two shifts are about closing that gap before the first word is written, or the first raw video is collected, edited, or clipped.

Shift 4: Virality is evidence of distribution, not importance

Unlearn: Treat trending content as proof that something matters.

Relearn: Virality tells you something was distributed at scale. It tells you almost nothing about whether it reflects reality.

One of the most corrosive habits in covering online harm and extremism is treating visibility as proof. A piece of content goes viral. It appears in your feed, your editor’s feed, your source’s feed. It feels significant because it is everywhere.

But everywhere on a platform is an editorial decision made by an algorithm, not a measure of cultural reality, social consensus, or genuine threat. Platforms are built to surface conflict and blur the line between significance and visibility. Journalism walks straight into that trap when it treats the trending tab as an assignment editor.

Urgency and importance are not the same thing. A piece of content can reach ten million people and reflect almost nothing about how those people actually think, behave, or live. A pattern that affects millions can be entirely invisible on a platform because it doesn’t produce the engagement mechanics that make content travel.

The shift is learning to ask:

Is this visible because it is significant, or does it seem significant because it is visible?

What this looks like in practice

The 2024 tradwife surge looked, from inside the feed, like a mass cultural shift: a generation of women returning to domestic roles. Mainstream coverage treated it that way. Research from Monash University and the International Journal of Communication found no evidence of that in behavioral data. What the data showed was that recommendation algorithms were artificially funneling users toward increasingly extreme gender content. The trend didn’t reflect what women wanted. It reflected what the platform rewarded and amplified. Reporting it as a cultural shift normalized extremist content through perceived popularity, exactly the outcome the amplification was designed to produce.

The same failure appears constantly on extremism beats. Coordinated seeding makes fringe content look mainstream. Manufactured outrage cycles make marginal figures look culturally significant. The platform’s engagement mechanics are not neutral. They are specifically designed to surface the most inflammatory version of any story. Treating that surface as reality is a reporting failure, not just an editorial one.

Editorial test:

If this were not trending, would you still assign it?

If the answer is no, you are covering distribution, not reality.

If this incident had never been filmed, clipped, or algorithmically amplified, would it still matter?

If the answer is yes, that is where the story begins.

Field note on extremism and online harm beats specifically:

On these beats the virality problem is often deliberate, not incidental. Bad actors engineer viral moments specifically to manufacture the appearance of consensus, to make a fringe position look mainstream, a marginal figure look culturally dominant, an isolated incident look like a pattern. The tradwife surge was organic platform mechanics producing a distorted picture. Coordinated extremist content seeding is the same distortion produced intentionally.

The verification question is the same in both cases:

Is this visible because it is significant, or significant because it is visible?

Before escalating any trending story on these beats, ask whether the spike is organic or cluster-driven. The answer changes what you’re actually covering.

Toolkit 4: Auditing virality before you escalate

Before escalating any trending story, run this test:

Does this affect policy, safety, public resources, or a meaningful number of people beyond the feed?

If not, you are covering distribution.

For checking whether a spike is organic or coordinated: Junkipedia tracks coordinated inauthentic behavior and platform manipulation; the Stanford Internet Observatory publishes platform-specific research on amplification mechanics; Meta Content Library (which replaced CrowdTangle when Meta shut it down in 2024) and Brandwatch track cross-platform spread patterns.

For situating platform trends in behavioral reality: look for data that exists outside the platform, such as surveys, behavioral research, policy data, and academic studies, that either corroborates or contradicts what the platform surface suggests. Monash University’s platform research and the Reuters Institute’s Digital News Report are both useful starting points for cross-referencing platform visibility against real-world data. Bellingcat’s Online Investigation Toolkit is also worth checking.

Newsworthiness test before escalating any trend:

Does this have policy effects, safety implications, measurable behavioral uptake, or institutional consequences outside the platform?

If it scores zero on all four, it is a distribution story, not a significance story.
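For desks that keep a shared checklist, the four-part test above can be sketched as a small scoring helper. This is a minimal illustration, not a standard tool: the class name, field names, and verdict labels are assumptions made up for this example, and each yes/no answer still has to come from reporting done outside the platform.

```python
from dataclasses import dataclass, fields


@dataclass
class NewsworthinessCheck:
    """One yes/no answer per criterion in the newsworthiness test (hypothetical names)."""
    policy_effects: bool = False
    safety_implications: bool = False
    measurable_behavioral_uptake: bool = False
    institutional_consequences: bool = False

    def score(self) -> int:
        # Count how many of the four criteria were answered "yes".
        return sum(bool(getattr(self, f.name)) for f in fields(self))

    def verdict(self) -> str:
        # Zero on all four means the trend is a distribution story.
        if self.score() == 0:
            return "distribution story"
        return "possible significance story"


# Hypothetical trend: widely shared, but no effect found outside the platform.
trending_clip = NewsworthinessCheck()
print(trending_clip.score(), trending_clip.verdict())

# Hypothetical trend: an institution has responded to it outside the platform.
institutional_case = NewsworthinessCheck(institutional_consequences=True)
print(institutional_case.score(), institutional_case.verdict())
```

A score above zero only means the trend has cleared the first bar. It does not say how significant the story is, which is why the second label is deliberately cautious.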

See my full guide and series for the deeper breakdown of this case and related tools.

Shift 5: Situate the incident in the pattern

Unlearn: Let one vivid case carry the argument about scale.

Relearn: Every incident needs a baseline, a trend line, and proportionality (in both directions).

One vivid case can distort an entire public conversation. This happens constantly in coverage of online harm, extremism, and AI abuse: a single incident is treated as a trend, a trend is treated as a crisis, a crisis is reported without baseline data. Audiences end up with inflated fear, or false reassurance, and no way to judge what is actually escalating versus what is simply newly visible.

The proportionality failure runs in both directions, and both directions cause harm.

Inflating rare incidents produces moral panic, bad policy, and the kind of fear that bad actors deliberately manufacture. Minimizing widespread patterns produces public blindness to structural harm that is hiding in plain sight. Both failures serve bad actors: the first by manufacturing urgency around marginal threats, the second by providing cover for systemic ones.

Reporting the pattern means reporting both directions with equal rigor. How common is this, really? Is it rising, falling, or newly visible? Is this incident exceptional or representative? What would a reader need to know to make an accurate judgment about the scale and nature of the harm?

What this looks like in practice

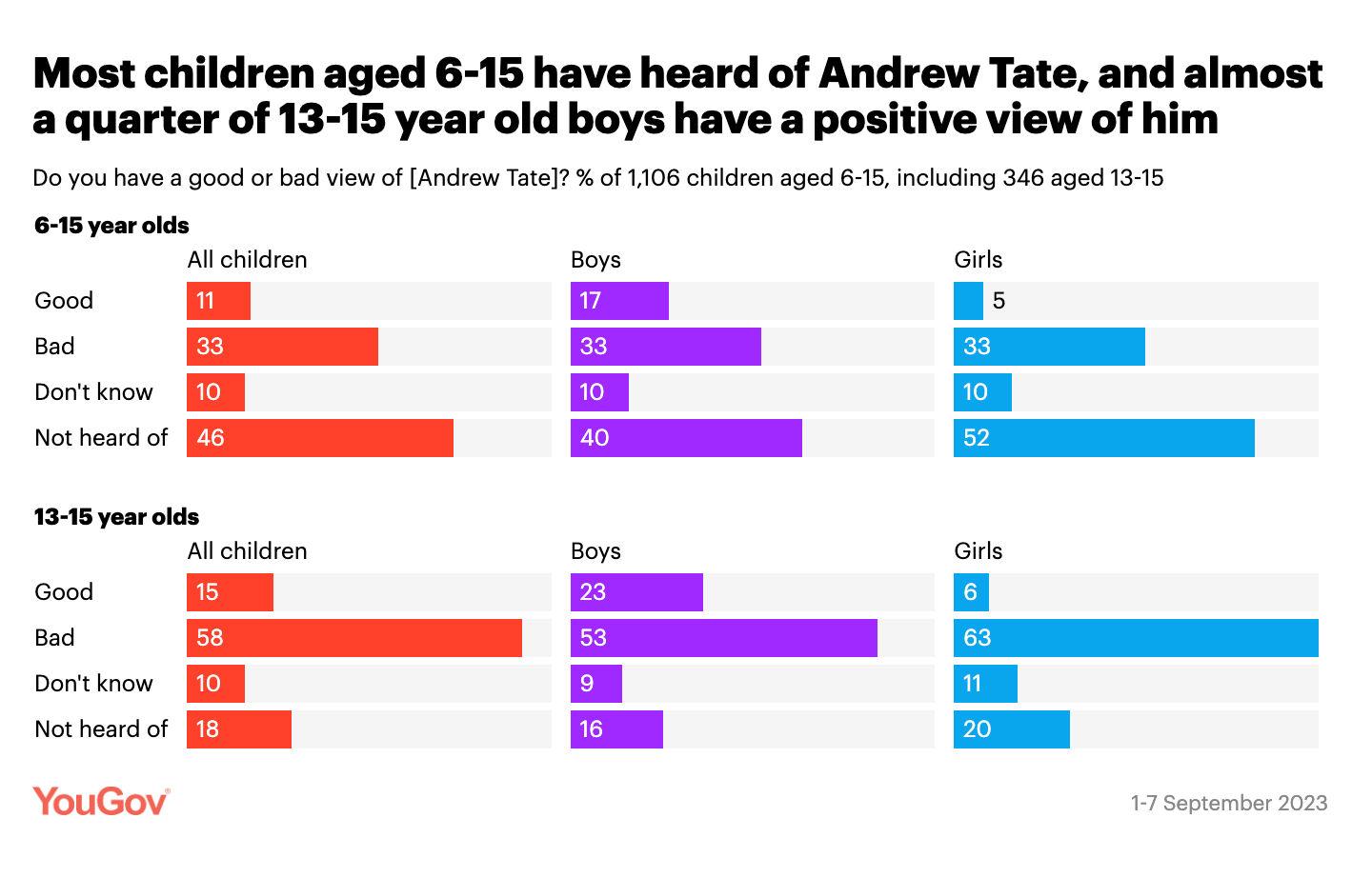

Coverage of incel-linked violence regularly treats isolated attacks as evidence of a mass movement, inflating a rare incident into a pattern that the data doesn’t support. Coverage of Andrew Tate’s reach did the inverse: the Hope Not Hate finding that 8 in 10 boys aged 16 to 17 had seen his material, and the YouGov finding that 1 in 6 boys aged 6 to 15 held a positive view of him, were treated as fringe statistics in coverage that hadn’t done the work of situating the numbers. Those are not fringe statistics. Reporting them as such was a failure of proportionality in the opposite direction, minimizing a documented, widespread pattern because the numbers were uncomfortable to report at their actual scale.

The Marshall Project’s context-first reporting on crime data is the model for how to do this right: every incident is situated in the baseline, every trend is measured against historical data, and the story names clearly what the data shows and what it doesn’t. One dramatic incident never distorts the national picture because the national picture is always present in the reporting.

Editorial test:

Are you showing readers where this event sits in the real pattern — or are you allowing vividness to substitute for scale? Can you state, specifically: is this incident typical, exceptional, rising, falling, or simply newly visible?

If you can’t answer that question before you file, the context is missing.

Field note on these beats specifically:

The proportionality question is weaponized on both sides of these beats. Extremist actors inflate their own significance to appear more powerful and culturally dominant than they are. Treat their visibility claims with the same skepticism you’d apply to any source with an incentive to exaggerate. Platform harm is simultaneously minimized by the institutions that produce it. Treat their framing of harm as marginal or exceptional with the same skepticism. Your job is to find the actual baseline, which usually requires going outside the platform, outside the institution, and outside the most visible sources on both sides.

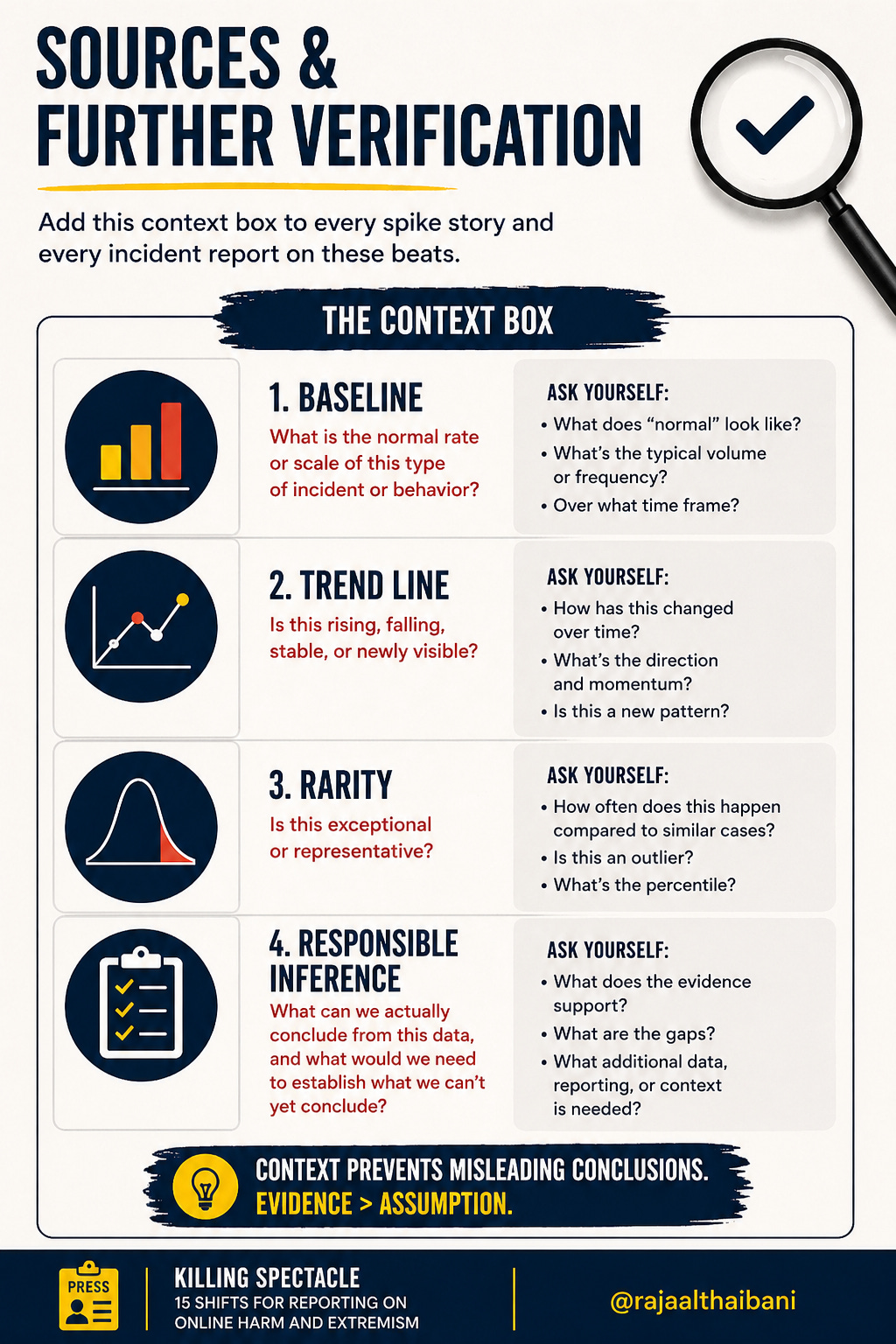

Use the context box. Add this to every spike story and every incident report on these beats:

Baseline: what is the normal rate or scale of this type of incident or behavior?

Trend line: is this rising, falling, stable, or newly visible?

Rarity: is this exceptional or representative?

Responsible inference: what can we actually conclude from this data, and what would we need to establish what we can’t yet conclude?

That box, applied consistently, prevents a single dramatic case from doing the work that evidence should do.

Toolkit 5: Context tools for situating incidents in pattern

For baseline and trend line data on online harm and extremism: the Global Network on Extremism and Technology (GNET) publishes research specifically designed to provide contextual data for journalists; the Center for Countering Digital Hate (CCDH) tracks platform harm at scale; Hope Not Hate’s annual State of Hate report provides UK and European baseline data on extremism reach and penetration.

For AI harm and platform data: the Stanford Internet Observatory, the Oxford Internet Institute, and the Alan Turing Institute all publish research that provides the baseline data most platform coverage lacks. Use them before you file, not after.

For proportionality in both directions: the Context Box above. Apply it to every spike story. If you can’t fill in all four fields, you don’t have enough information to report the incident as evidence of a pattern.
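For newsrooms that template their stories, the four-field Context Box can be sketched as a small structure that flags empty fields before filing. This is a rough illustration under stated assumptions: the class, method names, and the "[MISSING]" marker are invented for this example, and the sample entries are placeholders, not real data.

```python
from dataclasses import dataclass, fields
from typing import List

# Display labels for the four Context Box fields.
LABELS = {
    "baseline": "Baseline",
    "trend_line": "Trend line",
    "rarity": "Rarity",
    "responsible_inference": "Responsible inference",
}


@dataclass
class ContextBox:
    """The four Context Box fields; an empty string means not yet reported."""
    baseline: str = ""
    trend_line: str = ""
    rarity: str = ""
    responsible_inference: str = ""

    def missing_fields(self) -> List[str]:
        # List every field that is still blank or whitespace-only.
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def ready_to_file(self) -> bool:
        # All four fields must be filled before the incident is framed as a pattern.
        return not self.missing_fields()

    def render(self) -> str:
        # Produce the box as plain text, marking gaps so an editor can see them.
        lines = []
        for f in fields(self):
            value = getattr(self, f.name).strip() or "[MISSING]"
            lines.append(f"{LABELS[f.name]}: {value}")
        return "\n".join(lines)


# Placeholder draft with two fields still unreported.
draft = ContextBox(
    baseline="Placeholder: normal rate from an off-platform dataset",
    rarity="Placeholder: exceptional, per the same dataset",
)
print(draft.render())
print("Missing:", draft.missing_fields())
print("Ready to file:", draft.ready_to_file())
```

The check only confirms that each field has been filled in. Whether the baseline or trend line is accurate still depends on the sourcing behind it.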

Pro tip: add the context box to every spike story: baseline, trend line, rarity, and what we can responsibly infer. It takes ten minutes and prevents the single most common framing failure on these beats.

See my full guide and series for the deeper breakdown of this case and related tools.

What these two shifts have in common, and how they complete Phase 1 (shifts and tips before reporting)

Both shifts are about the same underlying discipline: not letting the platform assign your story.

Shift 4 asks whether what is visible is actually significant. Shift 5 asks whether what is significant is being accurately sized. Together they close the two most common framing failures on these beats: treating virality as cultural evidence, and treating a single incident as proof of a pattern.

Combined with the first three shifts, Phase 1 is now complete. You know what story you’re telling (the system, not the spectacle), whose experience is at the center (the burden-bearers, not the perpetrators), what you’re following (consequence, not conflict), whether the platform signal is real (Shift 4), and whether the scale is accurate (Shift 5).

Phase 2 begins where Phase 1 ends. Once you know what you’re covering, how you gather and weigh evidence determines everything.

Please share your favorite tips and any additional shifts you think we should be making as media and human rights practitioners reporting on online harm. The shifts, tips, and toolkits in this series are non-exhaustive and serve as a launching pad for this community, which includes YOU, to draw from as well as contribute to.

Because we care. And because we want to win. Collectively.

The Field

The practices above are easier to sustain inside a community of people working on the same problems than alone. That’s what The Field is: a cross-sector community of practice for journalists, researchers, advocates, lawyers, and technologists working at this intersection, built on the principle that the people with the most situational knowledge are often those with the least institutional power, and that better practice requires closing that gap deliberately. If this is your beat, wherever you are, it’s yours to join.

Join The Field: Fill out the intake form or reach out to me directly at inquiries@rajaalthaibani.com

The Frontier

Phase 1’s specific frontier question is the platform transparency problem: you can’t accurately situate incidents in pattern or audit virality if the platforms controlling the data don’t share it. Shifts 4 and 5 both require external data sources precisely because platform data is inaccessible, unreliable, or strategically curated. The EU Digital Services Act and US platform accountability efforts are the most significant structural responses to this problem, and both are currently contested, partially implemented, and under pressure. Naming that gap is part of what this guide does. Closing it requires more than better journalism. It requires platform accountability infrastructure that doesn’t yet exist at the scale these beats require.

Up next — Killing Spectacle, Part 2: Rigor Without Cruelty

Phase 1 is complete. Phase 2 is where the frame you built either holds or falls apart.

How to verify claims in spaces designed to mislead — including how to slow down when emotion spikes and bad actors are deliberately manufacturing urgency. How to build an evidence hierarchy rather than a transcript of competing claims. And how to use AI to see what only becomes visible at scale, rather than to produce faster noise.

Part 2 is where the craft gets specific. The frame is only as good as what you can prove.

If this is your beat, or the beat of someone you edit, teach, or commission, send this directly and invite them to join The Field. The journalists and media practitioners who need this most are rarely the ones looking for it.

These aren’t universal rules. They’re practices drawn from years in this space. What works in a long-form investigation won’t always survive a breaking news brief. Context matters. This guide is a conversation: leave a comment or reach me directly with what’s missing and what works. What’s useful goes into the guide, with credit.

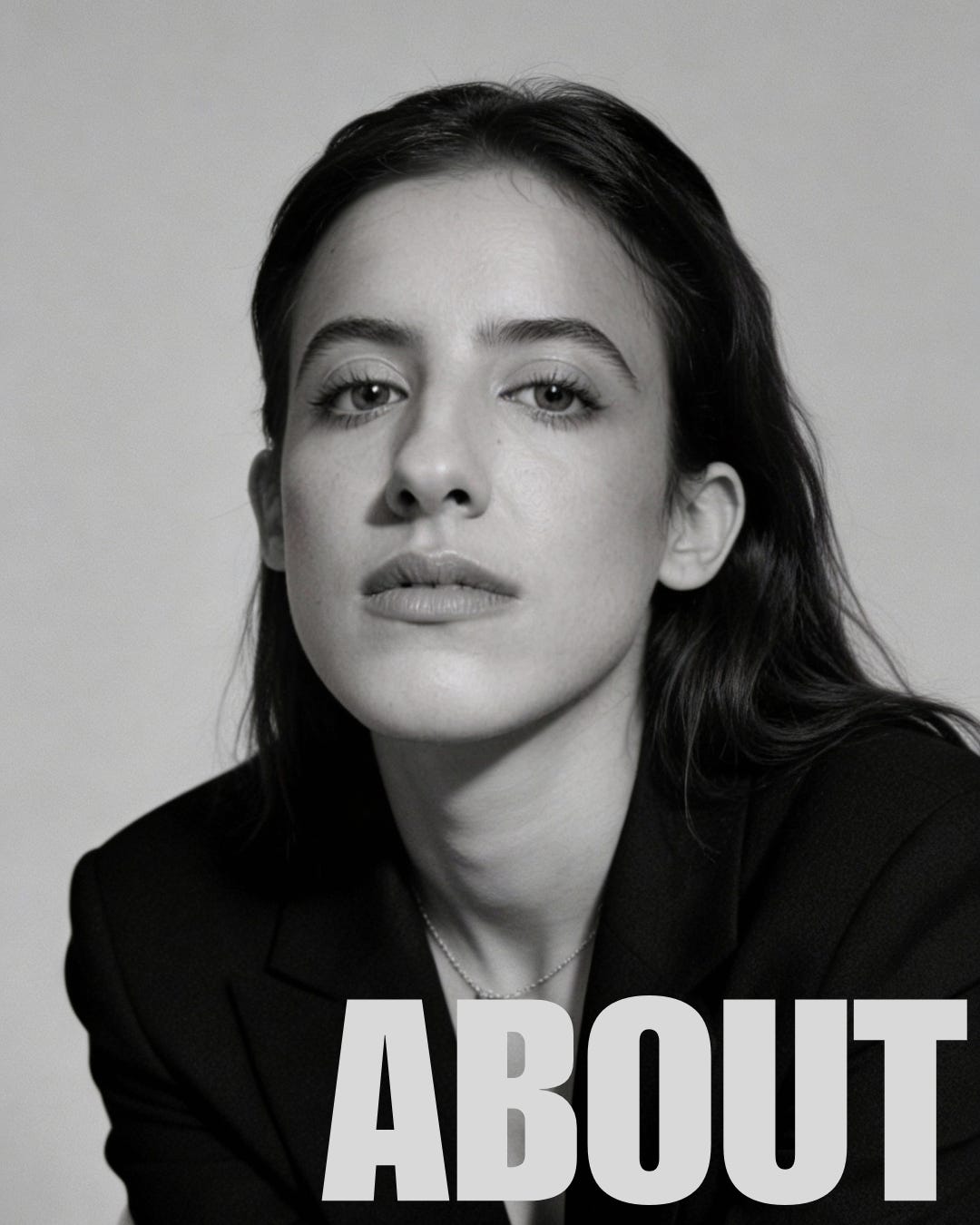

About the author. Raja Althaibani is an advisor, strategist, and trainer working at the intersection of human rights, media systems, digital evidence, and emerging technology. With over 15 years of experience across the Middle East, North Africa, and the United States, she has worked with journalists, civil society organizations, and international institutions to document harm, build accountability processes, and adapt human rights practice to shifting technological and political realities. She previously worked at WITNESS, the human rights organization specializing in video and digital documentation. She is the founder of Arab Hyphenated.

She writes Unembedded, which includes the ongoing series The Safari Is Over, and founded The Field, a cross-sector community of practice for journalists, researchers, advocates, and technical practitioners working on these beats.

inquiries@rajaalthaibani.com · www.rajaalthaibani.com · Join The Field

killing spectacle | media guide | virality | platform accountability | online harm | extremism | framing | Raja Althaibani